MAIN RESULTS OF THE PROJECT

Development of a complete and operational prototype incorporating all planned features.

With regard to the primary objective of our work—the development of a proactive multimodal interaction system within the context of human-robot interaction—our major contributions are:

(i) An audio-visual temporal fusion model for multi-user speaker diarization, which fuses audio and visual cues through the spatial coincidence of visual and sound source locations. The model is computationally lightweight and adapts to acoustic conditions without a training phase;

(ii) The definition of the Interaction Acceptance Belief (IAB) concept for HRI, which answers the question “What are the chances that my interaction will be accepted by the target user?” Analysis reveals that gaze direction is a key predictor of the IAB;

(iii) A deep learning-based approach for context-aware emotion recognition (CAER), where the context includes people, objects, location, etc. This innovative “bottom-up” approach processes all individuals present simultaneously rather than sequentially. An open-source ROS version enabled the integration of the model into the µDialBot framework designed for the Pepper robot;

(iv) An LLM-based agentic approach that replaces the traditional modular decision chain for robot control. By unifying dialogue management, action planning, and nonverbal communication into a single prompt-guided policy, it reduces design costs and complexity while matching or exceeding the performance of the previous system;

(v) A proactive multimodal HRI architecture, FlowAct, featuring a continuous perception flow and modular action subsystems. Based on three stages—perception, representation, and decision—it operates using submodules organized by controllers;

(vi) The implementation of the complete interaction system in ROS and on the Pepper social robot.

The contributions regarding the project’s second major objective—testing and evaluating this architecture in a hospital waiting room scenario—are:

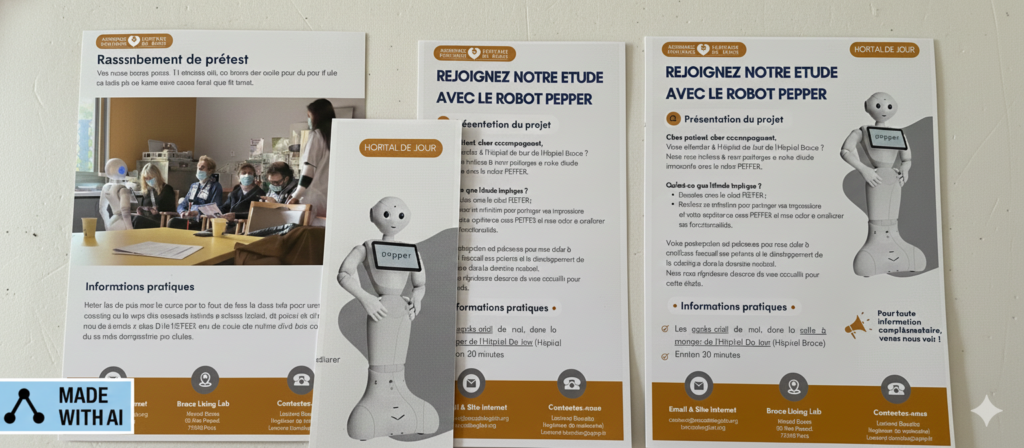

(i) A controlled exploratory experiment on proactive HRI that simulates behaviors in a hospital waiting room. It includes an evaluation of the user experience with the UMUX questionnaire, which indicates a satisfactory user experience, and a verification that the FlowAct implementation operates in real time;

(ii) Tests of the device in a real-world setting at Broca Hospital, together with feedback on the device’s usability and acceptance among hospital patients, gathered through the SUS and AES questionnaires. In this setting, however, the user experience score indicates a moderate level of usability.
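
For readers unfamiliar with the SUS questionnaire mentioned above, the following minimal sketch shows the standard SUS scoring rule (ten items rated 1 to 5, giving a score between 0 and 100). The project's own scoring procedure and scores are not given in this summary, so the function name `sus_score` and the sample responses are purely illustrative assumptions.

```python
def sus_score(responses):
    """Compute a standard SUS score (0-100) from ten 1-5 Likert responses.

    Illustrative helper only; not taken from the project's code base.
    """
    if len(responses) != 10:
        raise ValueError("SUS expects exactly 10 item responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")

    total = 0
    for index, response in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: contribution = response - 1.
            total += response - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: contribution = 5 - response.
            total += 5 - response
    # The summed contributions (0-40) are rescaled to the 0-100 range.
    return total * 2.5


# Hypothetical respondent who answers "neutral" (3) to every item.
neutral_score = sus_score([3] * 10)
```

A respondent who is neutral on every item lands at the midpoint of the scale, which is why SUS results are read against the full 0 to 100 range rather than as percentages.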

For further information, please contact muDialBot’s coordinator:
